January was the month of tech and invention, but February is the month that moves great ideas toward accomplishment by getting the first step done. From the first patented washing machine to the birthdays of Steve Jobs and Charles Darwin, a lot has happened in this month over the years.

Let’s scroll down and see what there is to learn about this month!

February 1, 2003

On this day, the Space Shuttle Columbia broke apart as it re-entered Earth’s atmosphere, 16 minutes before its scheduled landing at Kennedy Space Center in Florida, killing all seven members of the crew. The crew included Commander Rick Husband, Pilot William McCool, Payload Commander Michael Anderson, Mission Specialists Kalpana Chawla, David Brown, and Laurel Clark, and Payload Specialist Ilan Ramon.

Columbia was launched on January 16, 2003, on a 16-day mission to conduct about 80 experiments, including microgravity research, using a Spacehab module in the shuttle’s payload bay.

During launch, about 82 seconds after liftoff, a piece of foam insulation broke off from the external fuel tank and struck the left wing. It damaged the wing’s thermal protection system, but the damage went unnoticed while the crew carried out their research.

Upon reentry, as Columbia descended, superheated air penetrated the damaged wing and caused the structural failure that led to the catastrophe.

After this disaster, NASA suspended Space Shuttle flights for 29 months. Flights resumed with the launch of Space Shuttle Discovery on July 26, 2005.

February 3, 1690

Our wallets hold notes from Rs. 100 to Rs. 1000, but paper money did not come to rule the world so easily.

Paper notes were first introduced in China during the Tang Dynasty, but they were abandoned after about 650 years. Later, during the 17th century, the West took up the idea. In America, the credit goes to the British colonies and Britain’s war with France around the time of the Glorious Revolution.

At that time, in 1689, Massachusetts was an English colony. Coins had been minted there earlier, but that had stopped. During the war with France, Britain wanted the colonists to fight for it in Canada but rarely sent money to pay the soldiers. So, eventually, the Massachusetts Bay Colony issued certificates that soldiers could redeem for coins. This certificate, known as a bill of credit, is considered the first paper money in America.

February 3, 1821

How many of you have made memes and jokes about there being more women than men in the medical field?

Well, this did not start so long ago. Elizabeth Blackwell was the first woman to earn a medical degree in the US. She was born on February 3, 1821, in Bristol, England. She and her family moved to New York in 1832. There, she completed her education with private tutors. Blackwell decided to study medicine after a friend of hers fell ill.

She earned her degree from Geneva Medical College in 1849 and went on to train and practice in Paris and London. Blackwell was a pioneer of preventive medicine and hygiene, and she was the first woman to hold the chair of hygiene in a medical college. Aiming to bring more women into medicine, she founded the New York Infirmary for Women and Children and helped establish the London School of Medicine for Women.

Blackwell died on May 31, 1910, but her legacy is still with us. Every year, the Elizabeth Blackwell Medal is awarded to women who have made significant contributions to medicine.

February 5, 1914

Our brain is a factory of chemicals, and we got a glimpse of how it works thanks to Alan Hodgkin, a British physiologist.

Hodgkin was born on February 5, 1914, in Oxfordshire. He is known for demonstrating the role of potassium and sodium ions in generating the action potential that transmits nerve impulses. He carried out this research with Andrew Huxley in 1952, using the giant axon of the squid, and won the Nobel Prize for it in 1963.

He was a leading physiologist of the 20th century who conducted thorough research on vision, nerves, and muscles. During WWII, he was a member of the team that developed short-wave airborne radar, which contributed to later successes of the Royal Air Force.

In his career, Hodgkin held two eminent positions: he was the president of The Royal Society from 1970 to 1975 and the Master of Trinity College, Cambridge, from 1978 to 1984. He died in December 1998 after a prolonged illness.

February 8, 1828

“Around the World in Eighty Days” fascinated many of us in our teens.

Its author, Jules Verne, often called the father of science fiction, was born on February 8, 1828, in Nantes, France. He inherited the analytical mind of his father, Pierre Verne, and the imagination of his mother, Sophie Allotte de la Fuÿe, both of which can be seen in his books, especially in “From the Earth to the Moon.”

Verne was the eldest of five children. His family expected him to follow his father’s profession, but he opted for a career in literature and left an impact that has lasted for centuries.

His work has been adapted into, and has inspired, films, television series, and music videos, such as “A Trip to the Moon” and “Tonight, Tonight.” Jules Verne died on March 24, 1905, in Amiens, France, yet his work is still remembered and read.

February 9, 1811

Boats and ships were not perfected in a decade but over a century, and for every invention along the way, getting a patent was the first step.

On this date, Robert Fulton received his second patent for developing and constructing the steamboat, signed by President James Madison. He had received his first patent, for improvements to the invention, on February 11, 1809, signed by President Jefferson.

Fulton’s steamboat made a round trip of 300 miles between New York and Albany in 62 hours. The trip succeeded because the engine’s efficiency and the hull’s construction gave his steamboat an edge over past attempts and made it economically and commercially feasible.

Fulton’s steamboat entered commercial service in the early 19th century, which is why he is considered the father of the steamboat.

February 12, 1809

How many of you have friends or relatives who think that we are descendants of monkeys because of a poor understanding of the theory of evolution? Well, my teacher assumed the same. However, the truth is different, and learning it made me realize what a genius Charles Darwin was.

This genius was born on February 12, 1809, in Shrewsbury. He was a well-known biologist and geologist of his time, remembered for the then-controversial concepts he introduced, such as natural selection, often summed up as survival of the fittest. This concept says that organisms better adapted to their environment are more likely to survive and reproduce, while those that are not fade away.

Darwin’s theories laid the foundation of modern evolutionary biology and sharpened our understanding of how we humans came to be.

February 13, 1979

How many of you, or your parents, are facing hair loss? Well, there is a medicine for this issue: minoxidil.

It all started on this day, when pharmacologist Charles Chidsey received a patent for a treatment for male baldness. The patent was assigned to Upjohn. The company had long been working on minoxidil as a medicine for ulcer treatment. When it turned out to lower blood pressure, Upjohn gave the medicine to Chidsey to study.

While studying and experimenting with it, he noticed that it improved hair growth on the study subjects’ heads. He immediately consulted his colleagues, Dr. Kahn and Dr. Grant, and together they found a solution to an age-old issue.

On December 10, 1971, Dr. Kahn and Dr. Grant informed Upjohn about it, and the company soon filed for a patent. It named Chidsey as the sole inventor, which led to a dispute that was resolved by giving royalties to all three observers.

Upjohn launched the product under the name Rogaine, which received FDA approval in 1988. The patent expired in 1996, which allowed other brands to launch the same product. Yet the three are still considered the inventors of this amazing medicine, which you can use too.

February 14, 1845

For many, February 14 is the day of love, but for science lovers who want to see all genders in STEM, it’s the birth date of Lydia Sesemann, the first woman in the world to earn a PhD in chemistry. However, her research and experiences faded from view to such a degree that people forgot her and came to regard Julia V. Lermontova as the first woman with a doctorate in chemistry.

Sesemann was born in Wiborg to a family of German ancestry. She studied at the University of Zurich, and on May 15, 1874, she was awarded a doctoral degree by the Second Section of the Faculty of Philosophy.

Her thesis was well received, which led many to expect her to pursue a career, but the records say something different. They show that she was in Leipzig until 1882. During that time, she worked at Gustav Wiedemann’s Physical-Chemical Laboratory, but nothing is available about the work she did there.

Records show that she was in Lausanne for a while, where she was in touch with the Société de l’Union chrétienne. There, she was also a teacher for a year at The Indicateur Vaudois in 1896.

Sesemann left Lausanne in 1907 and moved to Munich with her sister, Helene, and stayed there with her mother until her death on March 28, 1925. She was an advocate of women’s rights and education. Lydia Sesemann was a forgotten scholar who was rediscovered only recently. May we keep remembering her.

February 17, 1827

We all have a fixed day for washing clothes, but do we know who invented the washing machine? Well, let’s set the invention aside and focus on who got the first patent.

Chester Stone, of New Haven County, Connecticut, was the first person to get a patent for a washing machine, on this date. The U.S. Patent Office issued him patent no. 4,669X, but unfortunately the details were destroyed in the 1836 Patent Office fire at Blodget’s Hotel.

The washing machine is one of the biggest inventions in our homes and one of the most useful things in our daily lives. Yet there is little information about Stone. People do, however, know about his son, Marvin Chester Stone, the inventor of the drinking straw.

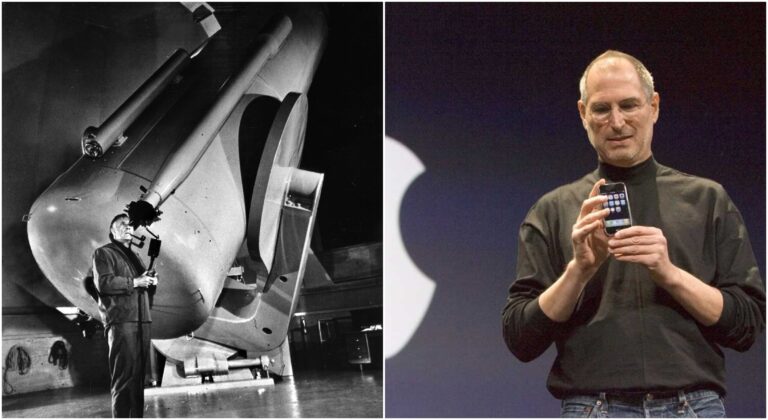

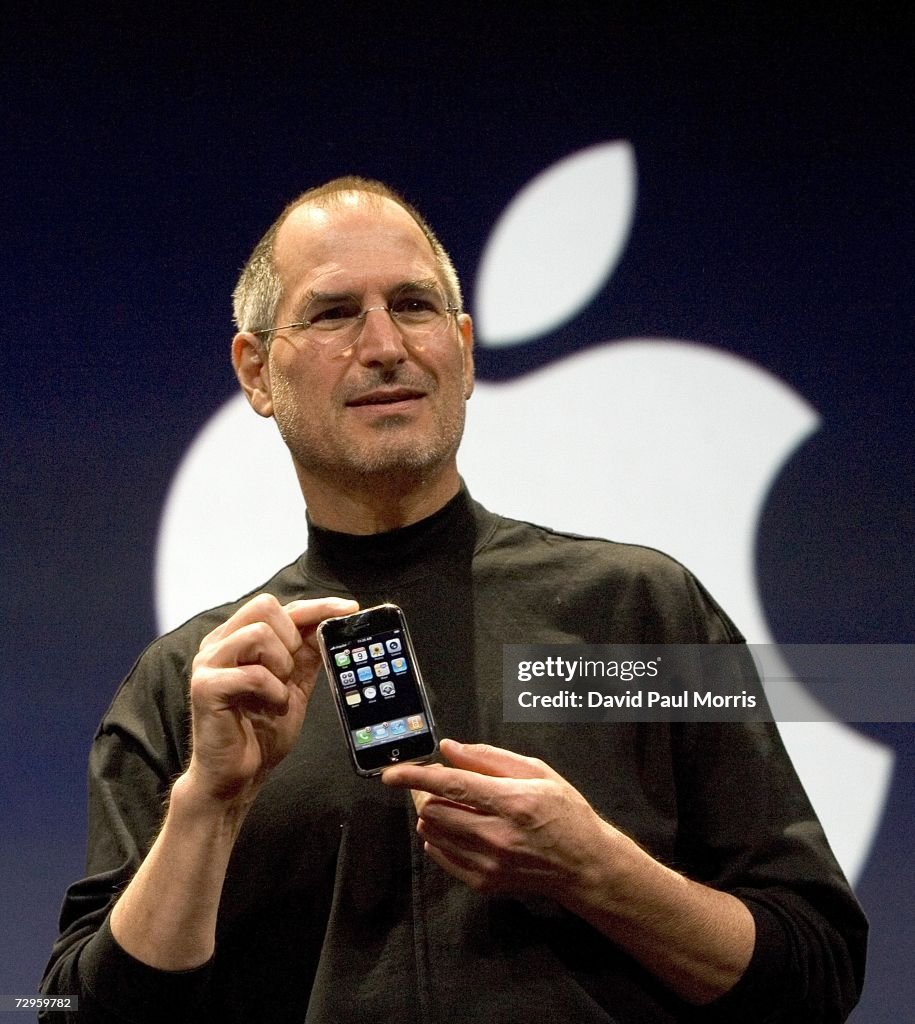

February 24, 1955

The history of the personal computer is incomplete without Steve Jobs, and his birth date is a must-remember for Gen Z readers who dream of entrepreneurship, living a purposeful life, and enjoying every moment.

Steve Jobs was born in California to Abdulfattah Jandali and Joanne Schieble, but Paul and Clara Jobs adopted him and raised him in Mountain View. As agreed with his biological mother, Paul and Clara sent him to university, at Reed College, but he dropped out.

Jobs explored different things in life, from Buddhism to games, and ended up in technology. In his youth, he joined the Explorers Club at Hewlett-Packard (HP), and he later met his future business partner, Steve Wozniak. With Woz and Ronald Wayne, he started Apple in 1976 and launched the famous Apple I, Apple II, Lisa, and Macintosh.

After being ousted from his own company in 1985, Jobs started NeXT and played a key role at Pixar, becoming its CEO. Later, in 1997, he rejoined Apple and launched the iPod, iPhone, and iPad, bringing the company great success. He died on October 5, 2011, leaving a lasting legacy behind.

February 27, 1900

We all live on painkillers. Thanks go to Felix Hoffmann for taking the first step.

While working at Bayer, Hoffmann synthesized a pure form of acetylsalicylic acid, the main ingredient in aspirin, in 1897. Bayer applied for a patent in Germany, but the application was rejected because the French had done it first. So the company applied in the US, where it was granted the patent and the right to manufacture the drug from 1900 to 1917.

Bayer named its product “aspirin” and started selling it, but because the name became generic, the company lost its rights to the trademark. Manufacturers in many countries started producing the drug under the same name, and Bayer faced fierce competition. That competition also faded after the launch of paracetamol in 1956 and ibuprofen in 1962.

Yet the efforts of Bayer and Hoffmann were not in vain: they led to the variety of painkillers available today, which makes them the Steve Jobs of their field in their own way.

February 28, 1953

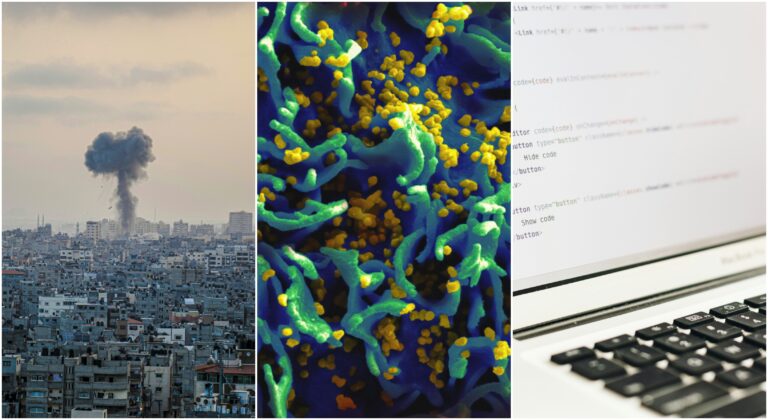

Funnily enough, many of our relatives have commented on what we have inherited in our DNA, yet the structure of that DNA was discovered on the last day of February.

Thanks go to James Watson and Francis Crick, who presented their model in a research paper published in “Nature” in 1953.

Drawing on the research and diffraction images of Rosalind Franklin and Maurice Wilkins, Watson and Crick explained how two strands of DNA twist around each other and form a ladder, with base pairs (adenine-thymine and guanine-cytosine) as the rungs. Through this structure, they explained how genetic information is passed down through generations.

For this work, Watson, Crick, and Wilkins won the Nobel Prize in Physiology or Medicine in 1962. Unfortunately, Franklin was left out, as she had passed away in 1958, before the award. This discovery may sound clichéd to you, but it paved the way for modern biotechnology.

From beginning to end, from books to patents and from the birthdays of geeks to doctoral degrees, February is like a treasure chest with so much inside it. What’s the most memorable event or date for you?

References:

https://www.space.com/19436-columbia-disaster.html

https://info.mysticstamp.com/wp-content/uploads/02-03-1690-First-Paper-Money.pdf

https://pmc.ncbi.nlm.nih.gov/articles/PMC11455243/

https://royalsocietypublishing.org/doi/10.1098/rsbm.1999.0081

https://www.wired.com/2012/02/feb-8-1828-a-literary-master-is-born/

https://www.rmg.co.uk/stories/topics/charles-darwin-one-britains-most-celebrated-naturalists

https://www.chemistryviews.org/lydia-sesemann-150-years-of-womens-doctorates-in-chemistry/

https://www.computerhistory.org/tdih/february/24/

https://www.bbc.co.uk/bitesize/articles/z4pd382

Read About January: Science Milestones in January: From Newton to NASA
